Fewer people are prioritizing donations to charitable non-profit organizations, particularly as younger generations enter the workforce. The client engages donors through fitness programs, such as walk/run events, where participants raise money to support its mission. The client wants to enhance the experience of these events by improving the application that registers and hosts attendees, with the goal of encouraging more young people to get involved and feel rewarded for their contributions.

Enhance user satisfaction to drive engagement, ultimately boosting donations.

The application was evaluated against Jakob Nielsen’s 10 Usability Heuristics for User Interface Design, and the violations found were used to inform improvements. Its accessibility was also evaluated against the Web Content Accessibility Guidelines (WCAG).

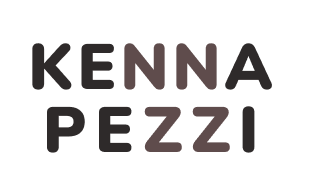

The application had 38 usability violations and 8 WCAG violations. The most prominent findings are summarized below.

Usability issues were ranked from most to least critical, and design recommendations were made to address each one. The client selected two of these issues for further study.

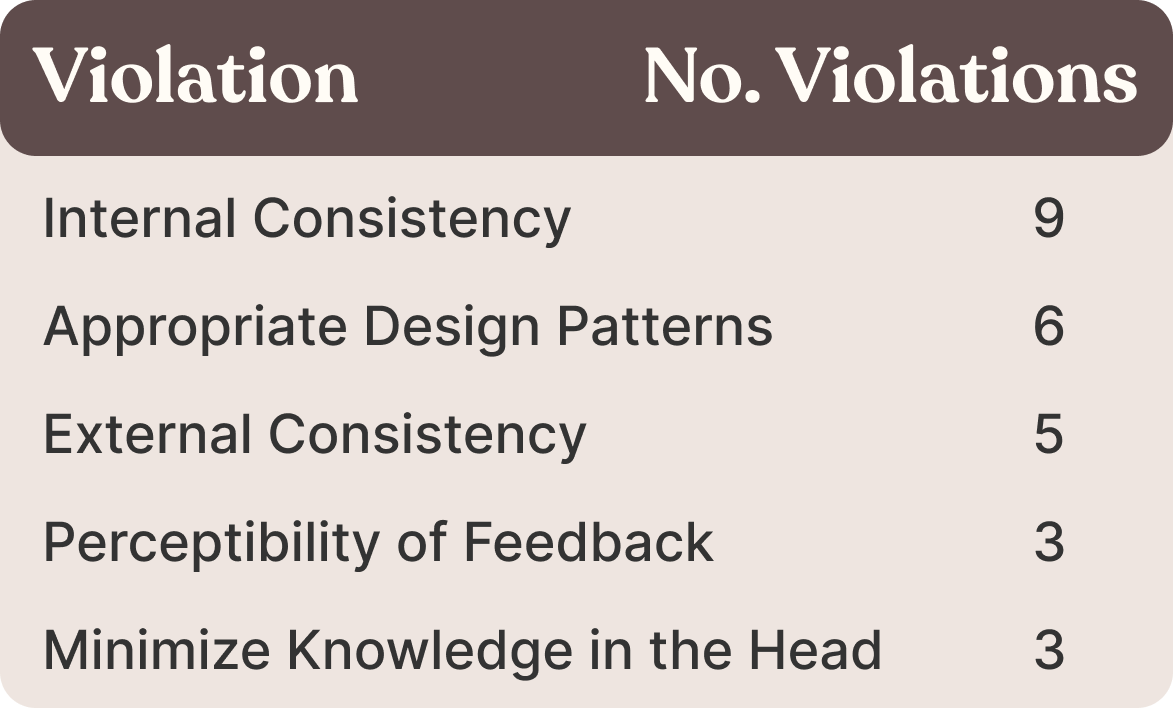

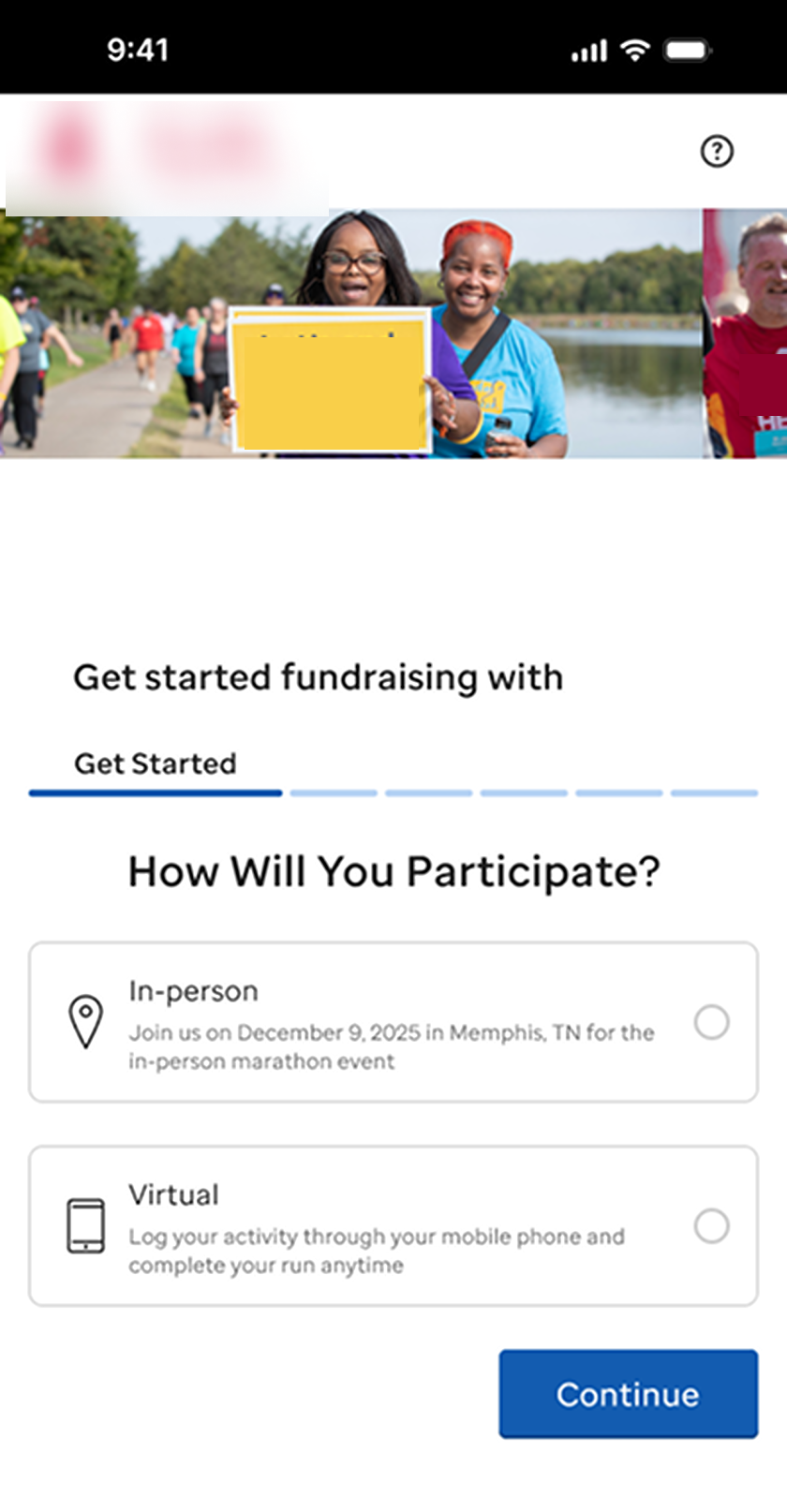

The onboarding process is external and tedious. Users may abandon the process, reducing participation in events and fundraising.

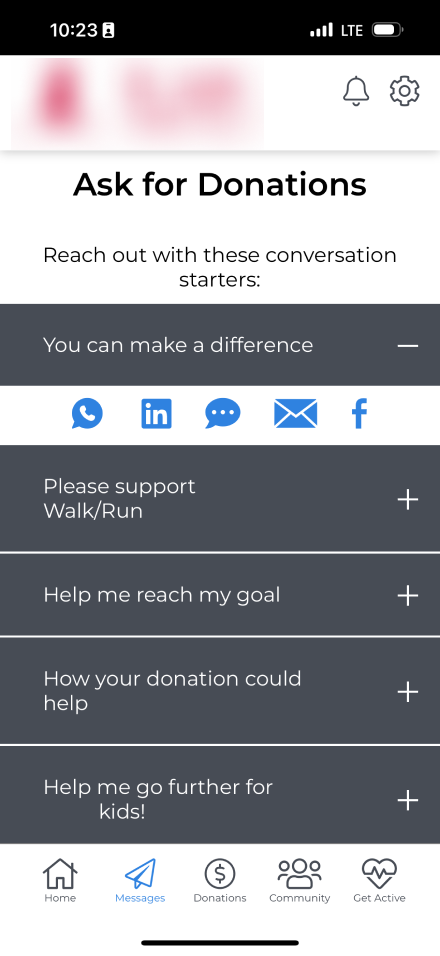

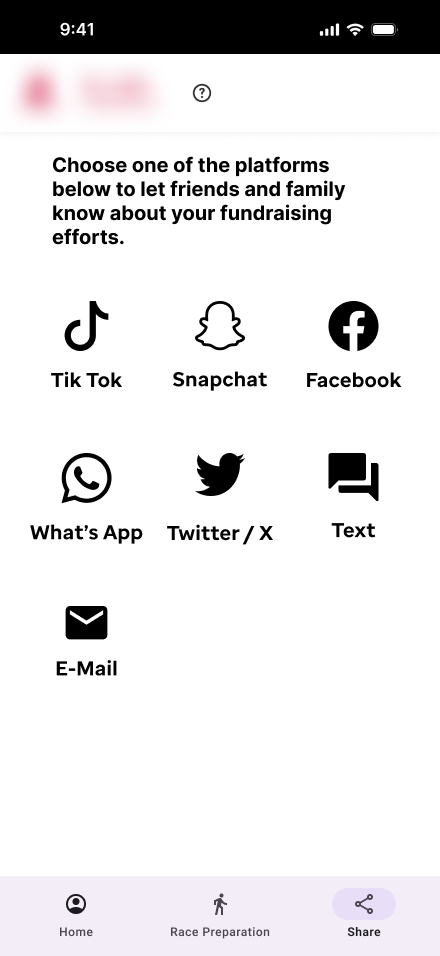

The process for selecting a social media message to share donation requests is unclear, which discourages users from sharing and negatively affects fundraising efforts.

To evaluate the effectiveness of our proposed improvements, participants were asked to interact with two different prototypes to perform the same tasks (a within-subjects design). One prototype represented the current application, while the other showcased an enhanced version based on insights from the heuristic study. This approach allowed participants to directly compare the two designs, offering valuable feedback on the differences.

To minimize transfer-of-learning effects, the presentation order was counterbalanced by randomizing which design each participant saw first, so that differences in user performance or feedback could not be attributed to the order in which the designs were tested.
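As an illustration of the counterbalancing described above, here is a minimal sketch of one way to randomize the first design shown while keeping the two orders balanced. The function name `assign_orders`, the participant labels, and the fixed seed are assumptions made for this example; the study's actual assignment procedure in UserTesting is not documented here.

```python
import random

def assign_orders(participant_ids, seed=None):
    """Randomly split participants so half see each design first."""
    rng = random.Random(seed)
    ids = list(participant_ids)
    rng.shuffle(ids)  # random order decides who lands in which half
    half = len(ids) // 2
    orders = {}
    for i, pid in enumerate(ids):
        # The first half of the shuffled list starts with the current design,
        # the remainder starts with the enhanced design.
        first = "current" if i < half else "enhanced"
        second = "enhanced" if first == "current" else "current"
        orders[pid] = (first, second)
    return orders

# Hypothetical participant labels for a 14-person study.
participants = [f"P{n:02d}" for n in range(1, 15)]
orders = assign_orders(participants, seed=42)

for pid in participants:
    print(pid, "->", " then ".join(orders[pid]))
```

Shuffling and then splitting the list guarantees an even 7/7 split, which a coin flip per participant would not.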

An unmoderated usability test was designed and recorded with UserTesting. Participants’ cameras and phone screens were recorded as they completed tasks in each prototype. At the end of each task, participants were asked to rate its difficulty on a Likert scale. At the end of the test, when all tasks had been completed on each prototype, participants were asked to compare their experiences between prototypes and to say which they preferred.

The client created prototypes in Figma based on suggested design improvements. These prototypes did not support user-inputted information; instead, selections and text fields were pre-populated with default values that appeared when clicked. However, users were still encouraged to interact with the application as they normally would.

Register as an individual for a virtual event

Share a message on social media

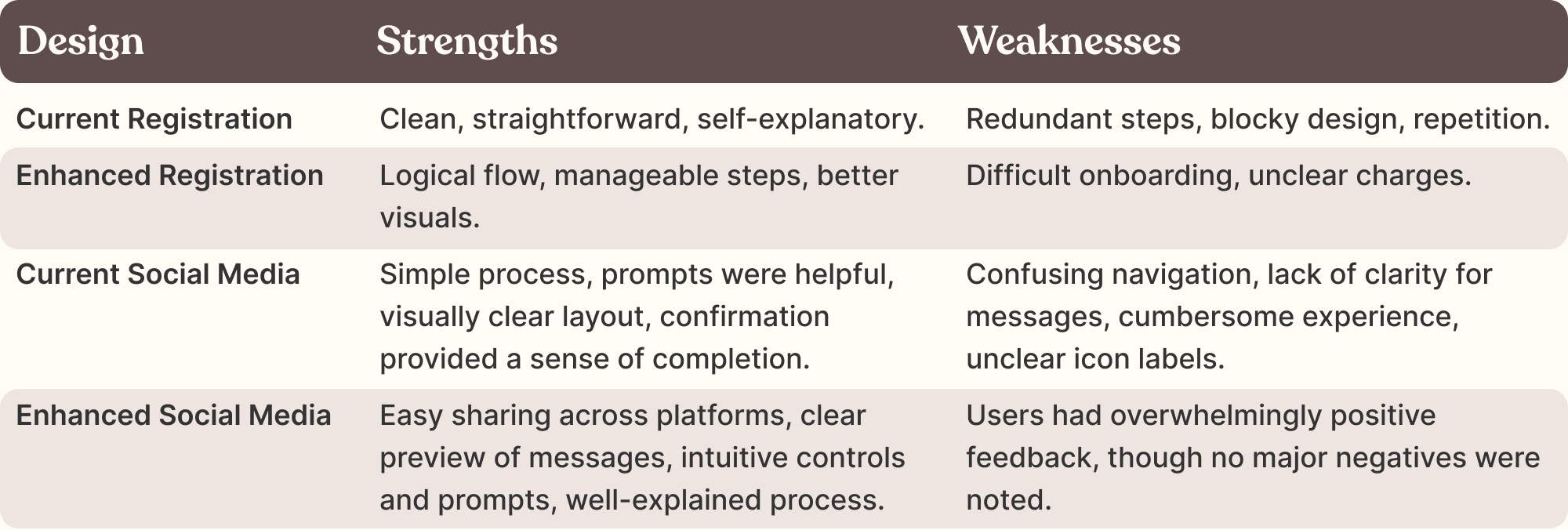

Users valued processes that felt simple, intuitive, and visually clear, highlighting the importance of streamlined navigation and logical flow. However, areas of friction, such as redundancy, unclear pathways, and a lack of intuitive guidance, revealed opportunities to improve.

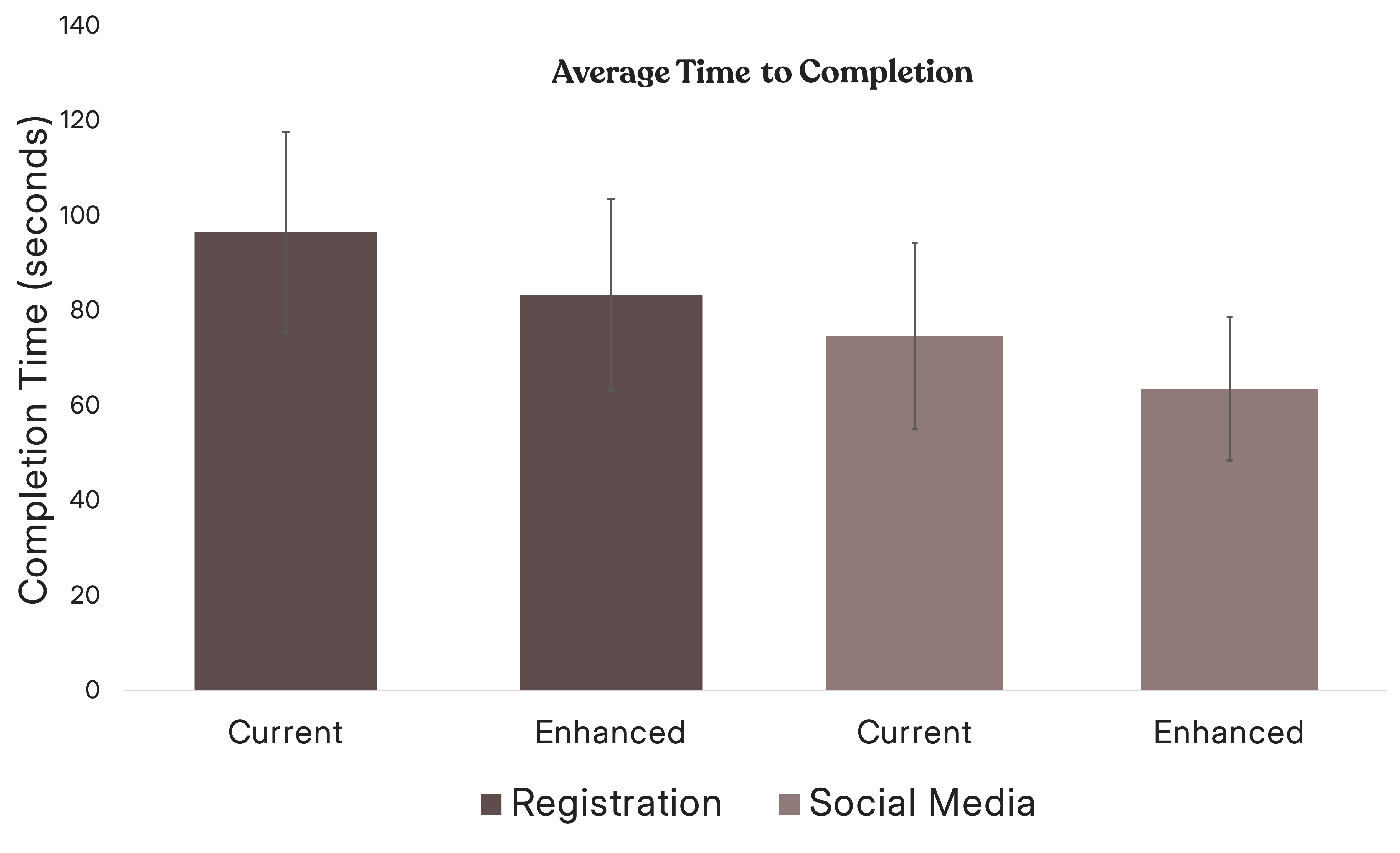

Users completed both tasks slightly faster with the enhanced design. This trend was not statistically significant, but it is worth exploring in future research.

Error bars represent 90% confidence intervals.
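For readers unfamiliar with how such error bars are derived, the following is a minimal sketch of a t-based 90% confidence interval for a mean completion time. The completion times are hypothetical placeholder values, not the study's data, and the write-up does not state how its intervals were computed, so the t-based method is an assumption.

```python
import math
import statistics

# Two-sided 90% critical value of Student's t for 13 degrees of freedom,
# i.e. a sample of 14 participants.
T_CRIT_90_DF13 = 1.771

def mean_ci(samples, t_crit):
    """Return (mean, lower, upper) for a t-based confidence interval."""
    n = len(samples)
    mean = statistics.mean(samples)
    sem = statistics.stdev(samples) / math.sqrt(n)  # standard error of the mean
    margin = t_crit * sem
    return mean, mean - margin, mean + margin

# Hypothetical completion times in seconds (NOT the study's data).
times_enhanced = [48, 52, 61, 45, 70, 58, 49, 66, 54, 57, 63, 51, 47, 60]

mean, lower, upper = mean_ci(times_enhanced, T_CRIT_90_DF13)
print(f"mean = {mean:.1f} s, 90% CI = [{lower:.1f}, {upper:.1f}] s")
```

The critical value is hard-coded for a sample of 14; a different sample size would need the corresponding value from a t table.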

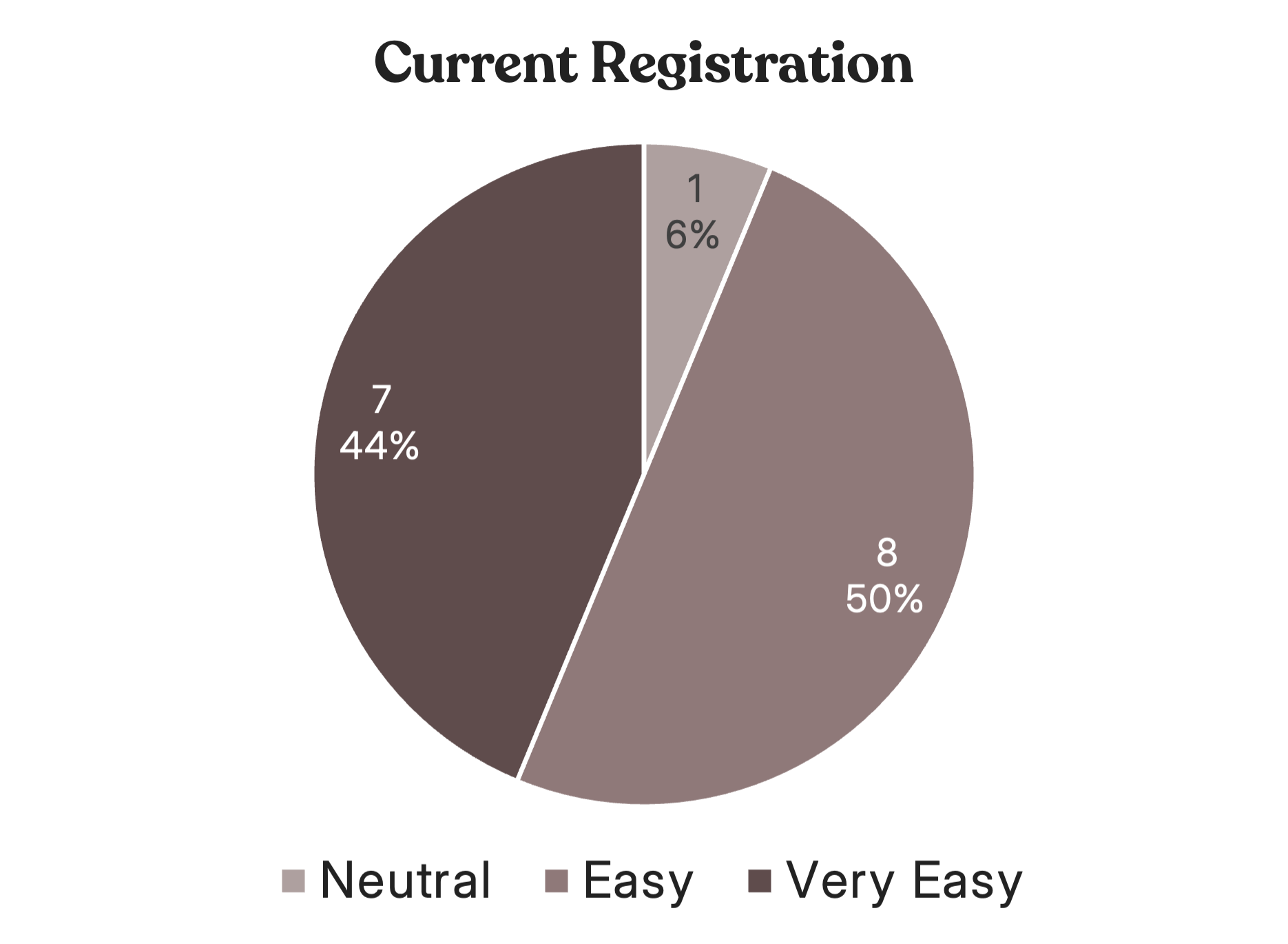

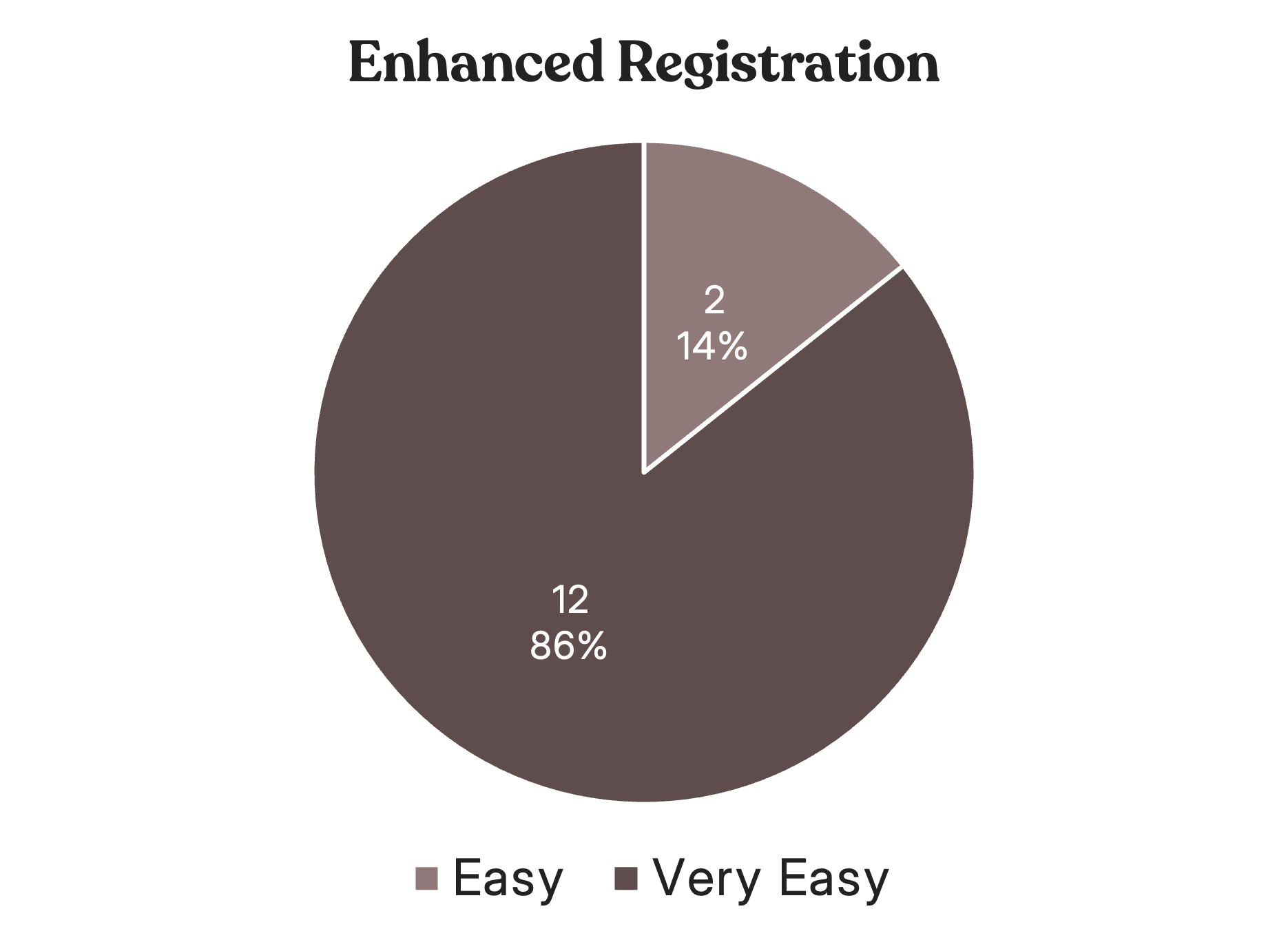

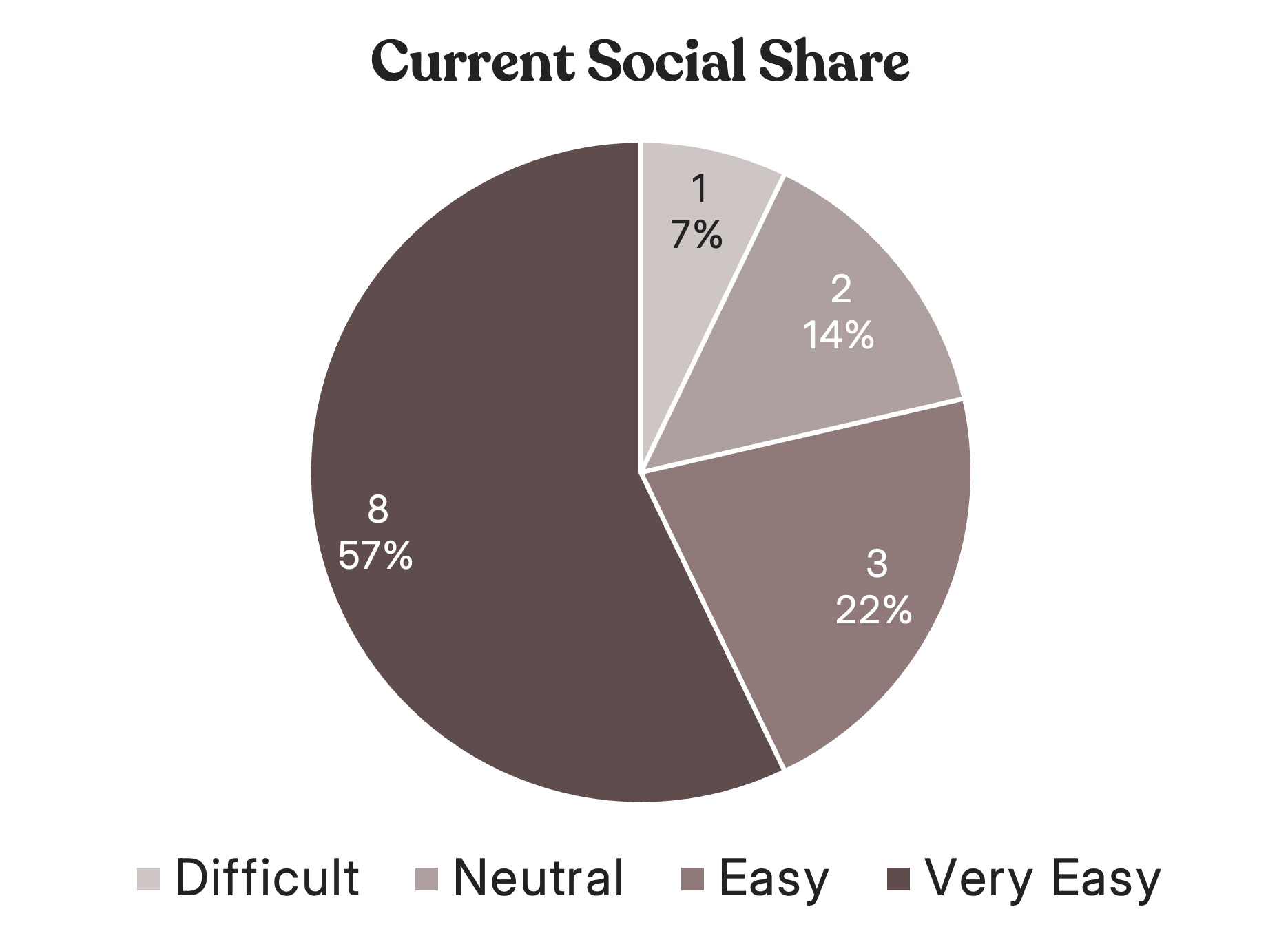

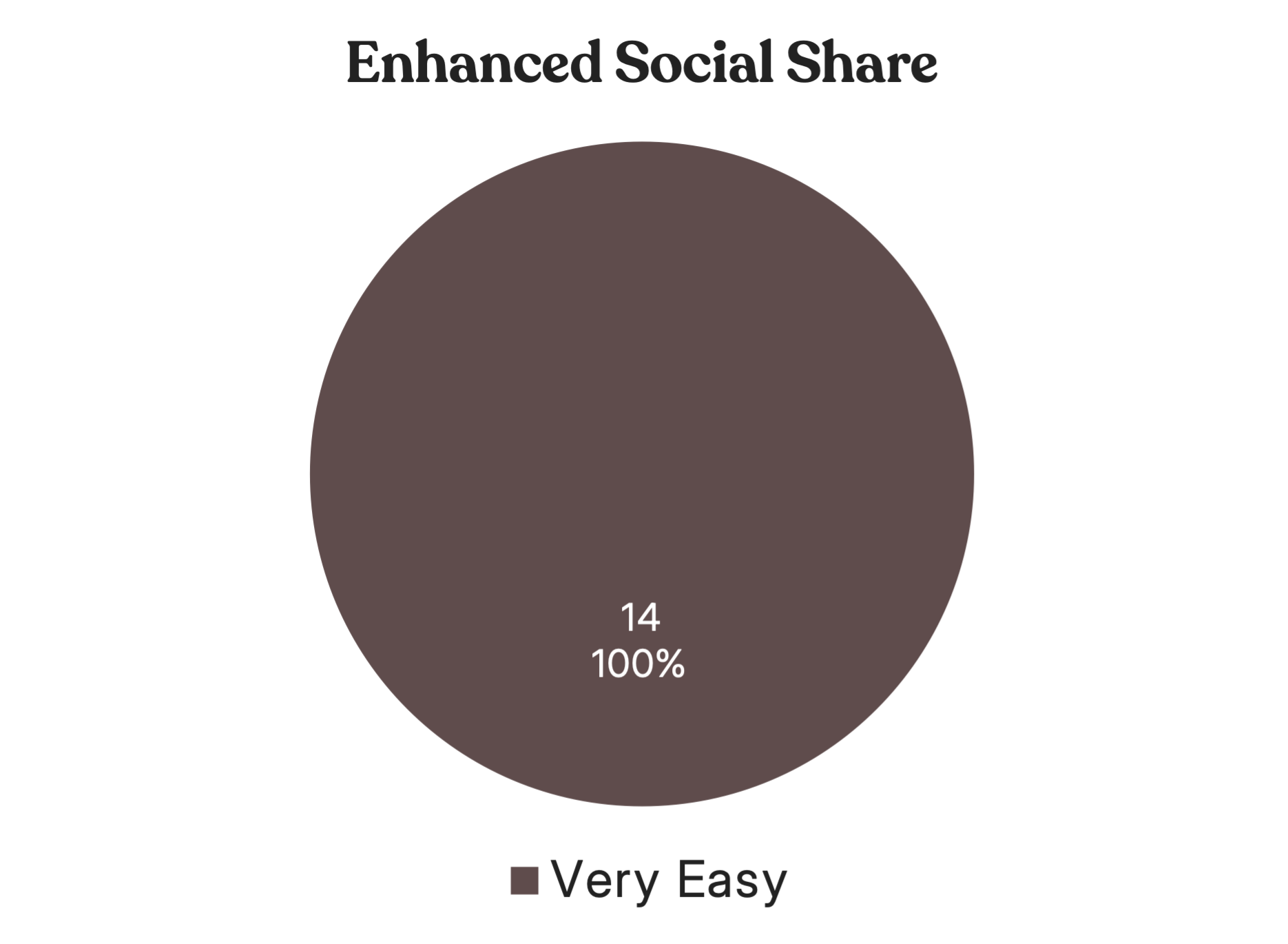

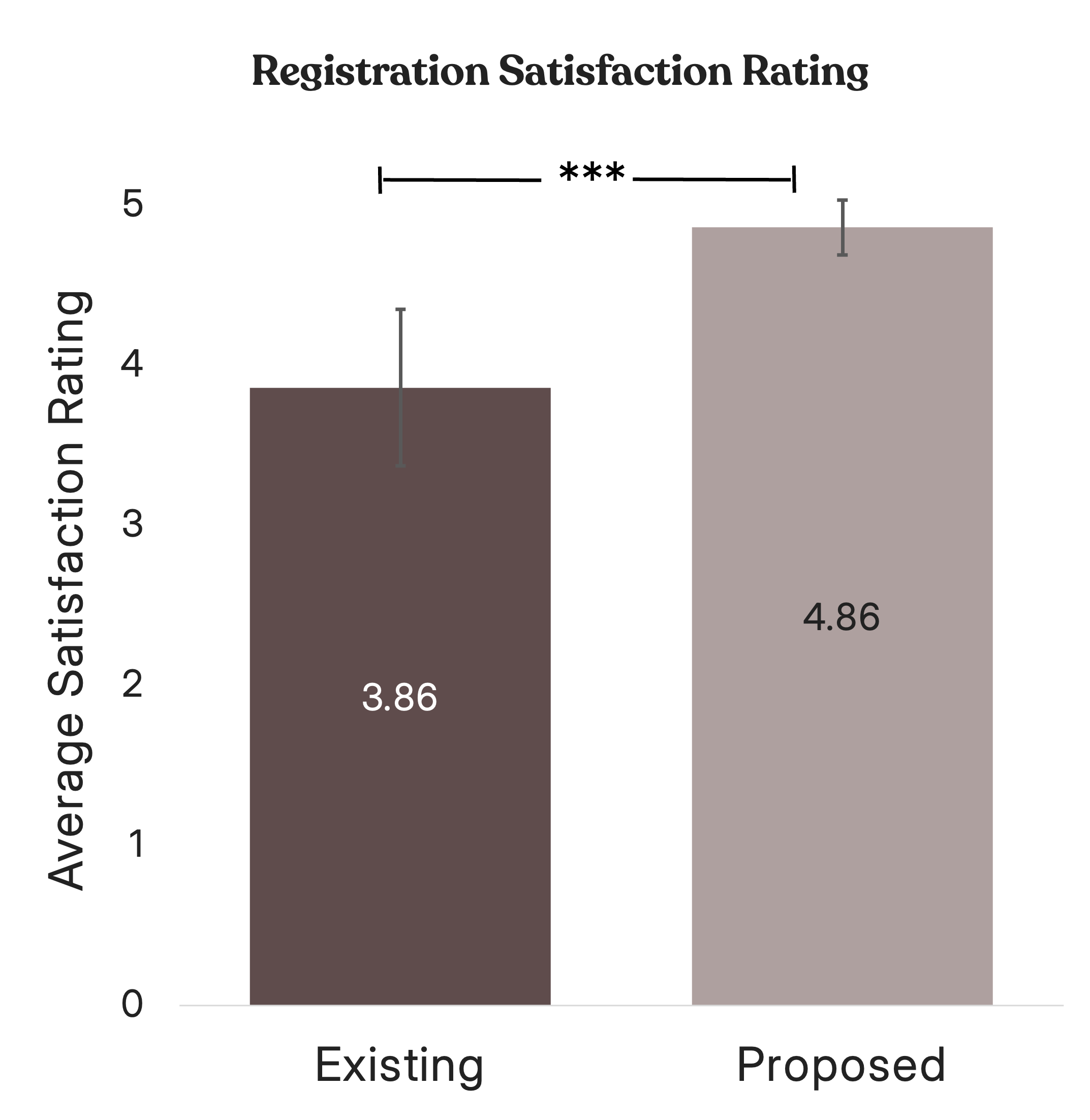

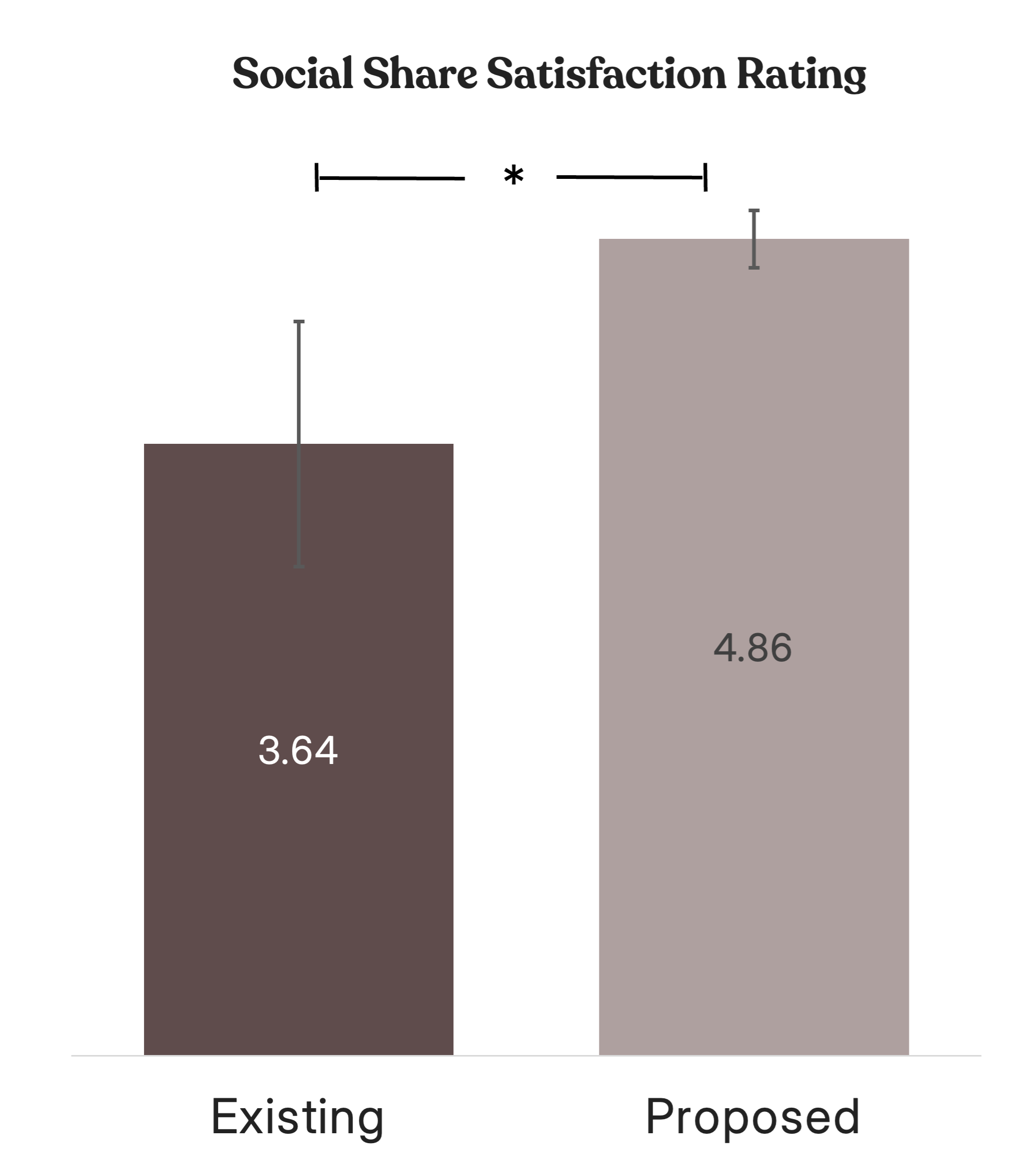

The difficulty ratings showed that users found performing tasks within the enhanced designs much easier than within the current versions. While the current designs were rated as more challenging, the enhanced versions significantly reduced friction and provided a smoother, more intuitive experience.

After completing all tasks, users were shown images of their previous experiences to help jog their memory. They were then asked which prototype they found easiest for task completion. Users consistently rated the enhanced prototypes as easier to use, and this difference was statistically significant for both the social sharing task (p = .003) and the registration task (p = .01).
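The write-up does not say which statistical test produced these p-values. As one illustrative option for paired ordinal ratings in a within-subjects design, here is a minimal sketch of an exact two-sided sign test. The ratings are hypothetical placeholders (assuming a 1 to 5 scale where higher means more difficult), and the function name `sign_test` is introduced for this example only.

```python
from math import comb

def sign_test(ratings_a, ratings_b):
    """Exact two-sided sign test on paired ratings; tied pairs are dropped."""
    diffs = [a - b for a, b in zip(ratings_a, ratings_b) if a != b]
    n = len(diffs)
    if n == 0:
        return 1.0  # no untied pairs, so no evidence of a difference
    k = sum(1 for d in diffs if d > 0)
    extreme = min(k, n - k)
    # Probability of a split at least this lopsided when each direction
    # is equally likely, doubled to cover both tails.
    tail = sum(comb(n, i) for i in range(extreme + 1)) / 2 ** n
    return min(1.0, 2 * tail)

# Hypothetical difficulty ratings per participant (NOT the study's data).
current = [4, 3, 5, 4, 2, 4, 3, 5, 4, 3, 4, 2, 3, 4]
enhanced = [2, 2, 3, 4, 1, 2, 3, 3, 2, 2, 3, 3, 1, 2]

p_value = sign_test(current, enhanced)
print(f"two-sided sign test p = {p_value:.4f}")
```

The sign test only uses the direction of each participant's difference, which suits ordinal Likert data; a Wilcoxon signed-rank test is a common alternative that also takes the size of the differences into account.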

Error bars represent 90% confidence intervals.

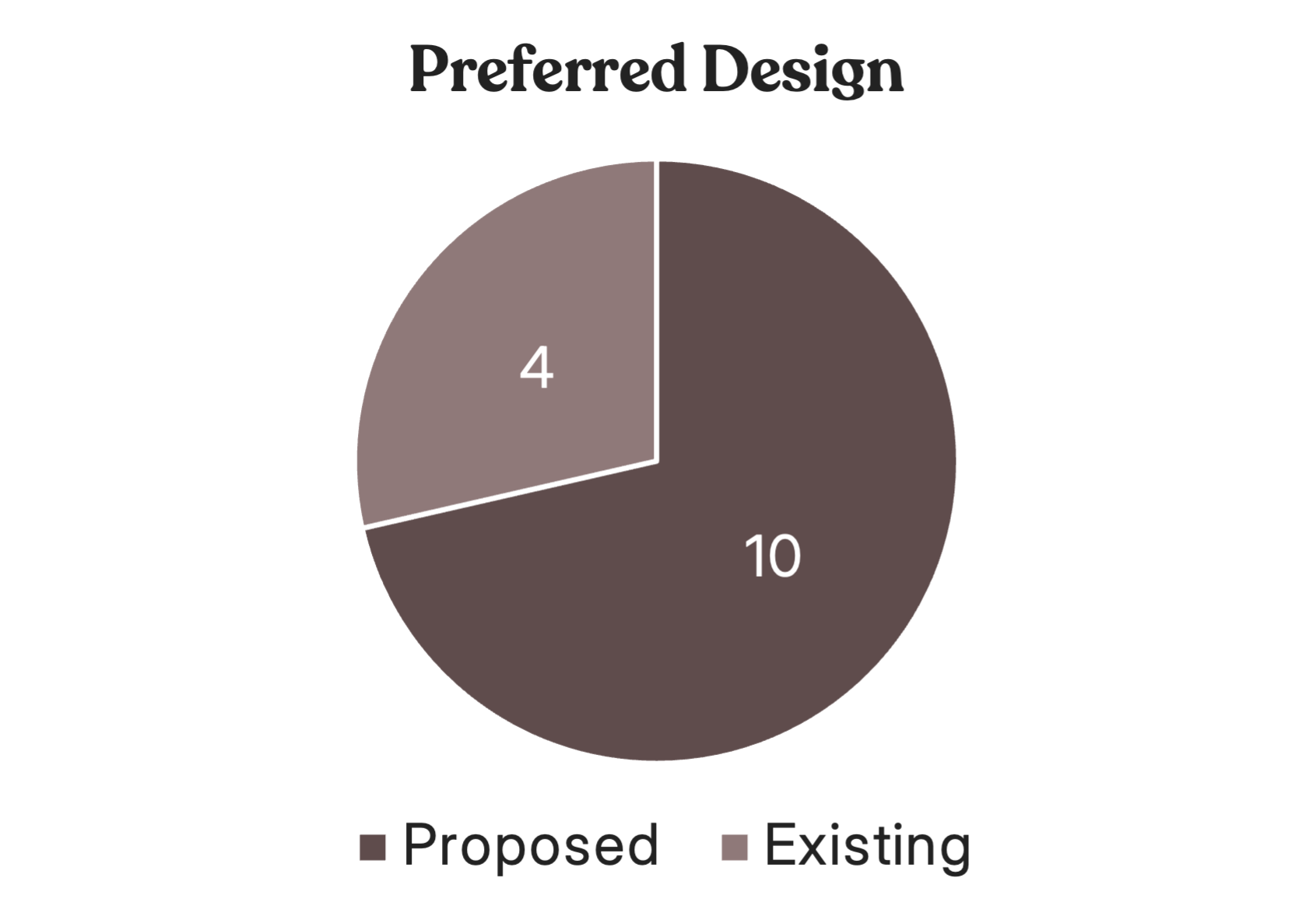

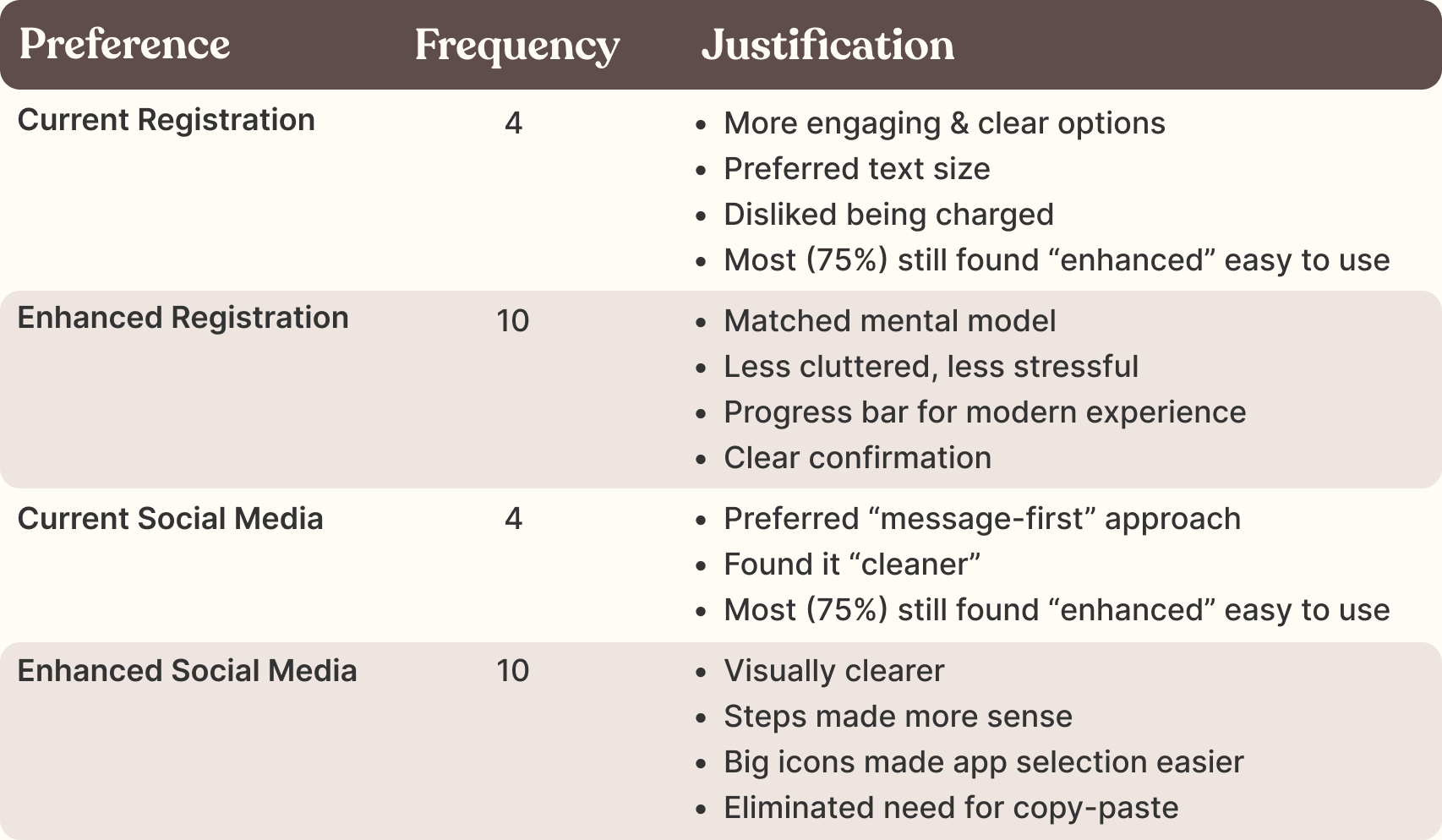

In the final question, participants were asked which prototype was the clearest overall for completing tasks. For both tasks, 10 out of 14 participants chose the enhanced prototype as their preferred option. However, participants who preferred the existing prototype did not consistently choose it for both tasks.

Participants were asked to elaborate on their preference between the prototypes based on their selection. Those who preferred the current prototype over the enhanced one still found the enhanced prototype easy to use. Their justifications are summarized below.

Based on the data and analysis, the enhanced prototype consistently emerged as the preferred design across tasks, demonstrating its ability to meet user needs more effectively. The majority of participants favored its clarity, user-friendly features, and alignment with their expectations. Although the existing prototype received some positive feedback, it was generally outperformed by the enhanced version in terms of usability and task efficiency.

These findings highlight the importance of iterative design processes that prioritize user feedback to create intuitive and effective solutions. Additional refinement based on participant insights could further improve the proposed prototype’s performance.